Canadian Cognitive Abilities Test: A Complete Guide for 2026

Apr 19, 2026

The envelope often arrives in a backpack, folded between a spelling sheet and a lunch note. A parent opens it after dinner and sees a formal notice about the Canadian Cognitive Abilities Test, usually shortened to CCAT. The reaction is almost always the same. A few practical questions, then a deeper worry. Is this an IQ test? Is my child being judged? Could one morning at school shape what happens next?

Teachers have their own version of that moment. A classroom teacher hears that a student has been flagged for CCAT testing and wonders how much weight to give the result. Is it a gifted screen, a planning tool, or just another number in a file?

That uncertainty makes sense. The Canadian Cognitive Abilities Test sits in an awkward space between assessment and expectation. It sounds technical, and schools often refer to it with very little explanation.

That Letter from School About the CCAT

One mother I worked with described the school notice as “official enough to make me nervous, but vague enough that I had no idea what it really meant.” That’s a fair summary of how many families experience the CCAT.

In real life, the situation usually looks ordinary. Your child’s school sends home a letter saying students in a certain grade will complete the test. Maybe the letter mentions gifted screening. Maybe it notes the assessment helps the school understand learning strengths. If your child already struggles, you may worry the test will confirm a problem. If your child is doing well, you may worry the result will create pressure.

What parents usually ask first

Most concerns fall into a few buckets:

Is this like a report card test? No. It isn’t based on memorised classroom content in the usual sense.

Can my child study for it? Not in the same way they’d study spelling words or multiplication facts.

Will one low result define them? It shouldn’t. A thoughtful school team treats the CCAT as one piece of information.

What if my child is anxious, tired, or distracted? That matters, and it’s one reason results need context.

A school psychologist or special education teacher may view the CCAT as a useful screen. Parents often experience it as a mystery. That gap causes unnecessary stress.

Practical rule: Read the school letter as an invitation to ask better questions, not as a verdict about your child.

Why this feels so loaded

The word “abilities” can trigger fear. Parents hear it and think fixed intelligence. Teachers hear it and think placement decisions. Children often hear none of that. They just know they’re being given something timed and unfamiliar.

That’s why plain language matters. The CCAT is best understood as a structured attempt to see how a student reasons through words, numbers, and visual patterns under standard conditions. It can be helpful. It can also be misunderstood.

If your larger concern is whether school testing is missing a learning difficulty, this guide to assessment for learning disabilities can help frame the bigger picture.

A good reading of the CCAT starts with calm. Not panic, not hype. Calm. Once you understand what the test measures, the letter from school becomes less intimidating and much more useful.

What the CCAT Is and Why Schools Use It

A parent may look at a CCAT report and wonder what, exactly, the school is trying to learn. A teacher may wonder whether the score reflects instruction, effort, language exposure, attention, or something deeper. The answer is narrower, and more useful, than many people expect.

The CCAT is designed to estimate how a student reasons with words, quantities, and visual patterns under standard testing conditions. A report card shows what a child has learned in class so far. The CCAT looks at some of the mental processes that can support future learning. That difference matters because two children can arrive at the same grade for very different reasons. One may grasp new patterns quickly but struggle with organization. Another may work steadily, practice hard, and perform well even when certain reasoning tasks take more effort.

A Canadian-normed reasoning measure

In Canadian schools, the CCAT is used as a group-administered cognitive abilities test for students across a wide age range. The current Canadian version is adapted for use with Canadian norms rather than relying on U.S. comparison groups. In practical terms, that means schools are comparing a student with similar-age or similar-grade peers in the Canadian school context, not treating the child as an isolated number.

That is one reason schools find the test useful. It creates a common frame of reference when students come from different classrooms, teaching styles, and learning histories. Families who want broader background on how these tools fit within psychoeducational practice can read this guide to cognitive ability assessment.

Why schools use it

Educators usually turn to the CCAT when they need a clearer picture of learning potential, especially when classroom performance raises questions that marks alone cannot answer.

Common reasons include:

screening for gifted programming

investigating underachievement

noticing uneven patterns across areas of reasoning

planning enrichment, intervention, or closer follow-up

Here is the most helpful way to read that list. Schools are not trying to predict a child’s whole future. They are trying to make better present-day decisions.

A strong result can suggest that a student may need more challenge than current classroom work is providing. A mixed profile can point to a student who reasons well in one area but needs support in another. A lower-than-expected pattern can also prompt better questions. Is there a language issue? A processing concern? Test anxiety? Gaps in opportunity to learn? The score does not answer those questions by itself, but it can help the school know which questions to ask next.

That is the bridge many families need. The CCAT is a snapshot, not a growth plan. The useful part begins after the score arrives, when teachers and parents translate the pattern into action. That might mean targeted enrichment, a review of classroom demands, observation for attention or language difficulties, or pairing the test results with newer tools that track learning, memory, and day-to-day functioning more dynamically.

What the CCAT does not measure

This point protects children from being misunderstood.

The CCAT does not measure character, creativity, persistence, curiosity, relationships, or emotional wellbeing. It does not capture whether a child was tired, anxious, distracted, or confused by the directions on that particular day. It also does not tell you how well a student has been taught, how much support they have received, or whether a hidden learning difficulty is interfering with performance.

A high score does not guarantee easy school success. A modest score does not place a ceiling on growth.

Used well, the CCAT helps adults notice patterns in reasoning and respond thoughtfully. Used poorly, it turns into a label. The healthiest approach is to treat the score as one structured clue within a larger picture of cognitive health, classroom functioning, and developmental growth.

Breaking Down the CCAT Batteries and Subtests

The CCAT is easier to understand when you stop thinking of it as one big score and start seeing it as three different windows into reasoning.

Across grade levels, the test includes three batteries: Verbal, Nonverbal, and Quantitative. The total number of questions varies by grade and ranges from 118 to 176, with administration over 2 to 3 hours at roughly 30 to 45 minutes per battery, according to Mercer Publishing’s CCAT overview.

Verbal battery

The verbal battery asks how well a student reasons with language. It is not a spelling bee and not a reading comprehension test in the usual classroom sense. It asks a child to notice relationships between words, ideas, and categories.

The scoring guide notes that the verbal battery includes three 10-minute subtests with about 20 questions each, and that verbal battery results correlate highly with school success.

In plain language, this battery asks questions like:

If two words belong together in one way, can the child spot a similar relationship in another pair?

Can they classify ideas quickly?

Can they reason through verbal patterns rather than recall definitions?

A practical example helps. Suppose a child can explain ideas beautifully out loud but gets tangled in written tasks. A stronger verbal reasoning result might suggest the child has the thinking ability but may be running into output or literacy barriers in class.

Quantitative battery

The quantitative battery is often misunderstood. Parents hear “quantitative” and assume it’s a maths achievement test. It isn’t. It focuses on abstract numerical reasoning.

Mercer’s CCAT description identifies three subtests:

| Subtest | Question count | Time |
|---|---|---|
| Number Analogies | 14 to 18 | 10 minutes |
| Number Puzzles | 10 to 16 | 10 minutes |
| Number Series | 14 to 18 | 12 minutes |

These tasks ask the child to see patterns, relationships, and logical rules in numbers. A student may be fine with classroom arithmetic and still struggle here if abstract patterning is hard. Another student may find this section easier than routine worksheet maths because they’re strong at spotting underlying structure.

Think of the quantitative battery as pattern detection with numbers, not a check of whether your child memorised yesterday’s lesson.

Nonverbal battery

The nonverbal battery often gives the clearest window into reasoning when language is a complicating factor. Scoring guidance for the test notes that these subtests typically include 16 to 22 questions each, take about 10 minutes, and are especially useful for students with reading or language challenges.

This battery uses figures, shapes, spatial patterns, and visual relationships. A child might need to identify what shape completes a sequence or how one figure changes into another.

That matters for students who are:

English language learners

Strong thinkers with weaker reading fluency

Students whose expressive language doesn’t reflect their reasoning

Children who shut down when language feels heavy

What the time limits tell us

Every battery includes speeded elements. That means the CCAT doesn’t only tap reasoning. It also places that reasoning under a clock.

This is why some students leave questions blank even when they are capable of solving them with more time. The score still tells you something useful, but it tells you something about performance under structured, timed conditions. It isn’t a pure measure of potential detached from context.

A helpful way to think about the batteries is this:

Verbal asks, “How do you reason with words?”

Quantitative asks, “How do you reason with number relationships?”

Nonverbal asks, “How do you reason with patterns and figures when language is reduced?”

When one battery stands out from the others, that difference often matters more than the headline number.

How to Interpret CCAT Scores and Profiles

A parent opens the report after dinner, sees rows of numbers, and feels their stomach drop. A teacher scans the same page before a meeting and wonders which number matters most. That reaction is understandable. CCAT reports can look technical, but they become much easier to read once you know what each score is trying to describe.

The first thing to remember is simple. A CCAT score is a snapshot, not a verdict. It shows how a child performed on one day, under timed conditions, compared with other students of a similar age or grade. Useful snapshot. Limited snapshot.

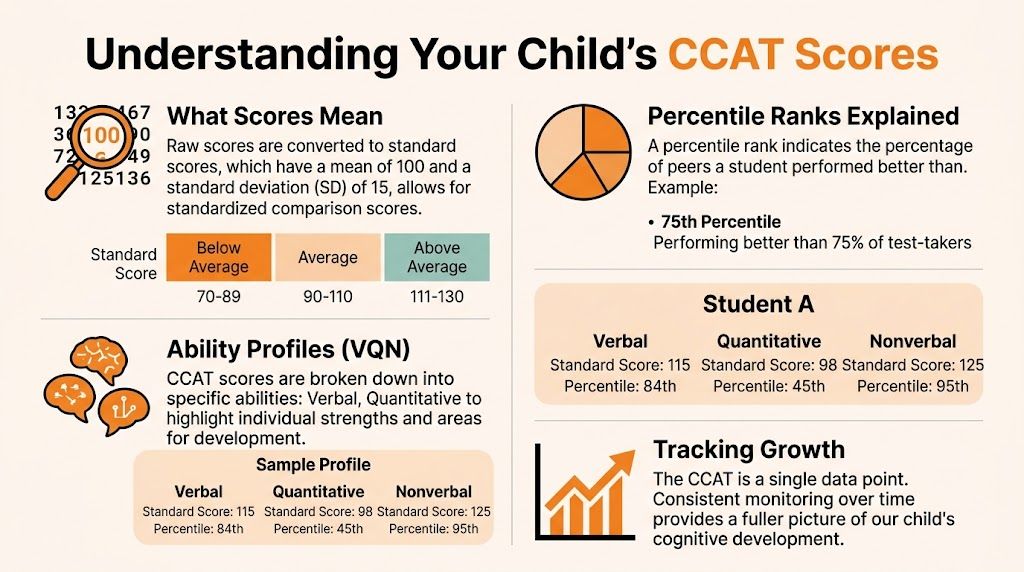

Start with the standard score

Many reports begin with a standard score. Parents often want to know whether that number is "good" or "bad," but that question usually leads people in the wrong direction. A standard score is best read as a position on a map. It shows where a child's performance fell relative to peers, with 100 representing the middle of the comparison group.

Percentiles often make this easier to grasp. If a child is at the 75th percentile, that does not mean they answered 75 percent of items correctly. It means their performance was at or above that of about 75 percent of students in the comparison group. That distinction clears up a lot of confusion.
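To make the difference between percent correct and percentile rank concrete, here is a minimal Python sketch. The comparison group, the 60-item test length, and both function names are hypothetical and invented for illustration. Published CCAT percentile ranks come from norm tables, not from a simple count like this one.

```python
from bisect import bisect_right


def percent_correct(raw_score: int, total_items: int) -> float:
    """Share of items answered correctly, as a percentage."""
    return 100.0 * raw_score / total_items


def percentile_rank(raw_score: int, comparison_scores: list[int]) -> float:
    """Percentage of the comparison group scoring at or below raw_score.

    This is one simple definition of percentile rank; it is an assumption
    for illustration, not the publisher's norming procedure.
    """
    ordered = sorted(comparison_scores)
    at_or_below = bisect_right(ordered, raw_score)
    return 100.0 * at_or_below / len(ordered)


# Hypothetical comparison group: 20 raw scores on a 60-item test.
group = [22, 25, 27, 28, 30, 31, 32, 33, 34, 35,
         36, 36, 37, 38, 39, 41, 43, 45, 48, 52]
child_raw = 39

print(percent_correct(child_raw, 60))     # 65.0
print(percentile_rank(child_raw, group))  # 75.0
```

In this made-up group, a child who answered 65 percent of items correctly sits at the 75th percentile. The two numbers answer different questions, which is why they rarely match.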

For families who have also seen psychoeducational testing and want a clearer sense of how score language compares across assessments, this guide to the Wechsler Intelligence Scale for Children can help.

Stanines are a quick sorting tool

Stanines work like a nine-rung ladder. The middle rungs reflect average performance. The top rungs reflect performance above that of many peers. The bottom rungs suggest that school tasks in that area may require more effort, more support, or both.

They are less precise than a standard score, but they are often easier to scan in a meeting.

Understanding CCAT Stanine Scores

| Stanine | Percentile Rank | General Performance Descriptor |
|---|---|---|
| 9 | 96th to 99th percentile | Very high |
| 8 | 89th to 95th percentile | Above average |
| 7 | 77th to 88th percentile | Above average |
| 6 | 60th to 76th percentile | Average |
| 5 | 40th to 59th percentile | Average |
| 4 | 23rd to 39th percentile | Average |
| 3 | 11th to 22nd percentile | Below average |
| 2 | 4th to 10th percentile | Below average |
| 1 | Below 4th percentile | Low |

A stanine does not tell you everything. It gives you a fast way to spot whether a score sits near the middle, above it, or below it.
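The stanine table can be read as a simple lookup. The Python sketch below encodes the same percentile bands and descriptors. The function and variable names are illustrative assumptions, and an actual report takes its stanines from the publisher's norm tables rather than from code like this.

```python
# Lowest percentile rank in each stanine band, copied from the table above.
# Ordered from the highest band down so the first match wins.
STANINE_FLOORS = [
    (96, 9), (89, 8), (77, 7), (60, 6), (40, 5),
    (23, 4), (11, 3), (4, 2), (1, 1),
]

# General performance descriptors, also copied from the table.
DESCRIPTORS = {
    9: "Very high",
    8: "Above average",
    7: "Above average",
    6: "Average",
    5: "Average",
    4: "Average",
    3: "Below average",
    2: "Below average",
    1: "Low",
}


def stanine_for_percentile(percentile: int) -> int:
    """Return the stanine band (1 to 9) for a percentile rank from 1 to 99."""
    if not 1 <= percentile <= 99:
        raise ValueError("percentile rank must be between 1 and 99")
    for floor, stanine in STANINE_FLOORS:
        if percentile >= floor:
            return stanine
    raise AssertionError("unreachable: every valid percentile has a band")


def describe(percentile: int) -> tuple[int, str]:
    """Return the stanine and its general descriptor for a percentile rank."""
    stanine = stanine_for_percentile(percentile)
    return stanine, DESCRIPTORS[stanine]


print(describe(75))  # (6, 'Average')
print(describe(88))  # (7, 'Above average')
```

Notice that the 75th percentile still falls in the "Average" band. That is a useful reminder that stanines are coarse: two children a few percentile points apart can land on different rungs.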

APR and GPR answer different comparison questions

Some reports include APR and GPR. Those labels stand for Age Percentile Rank and Grade Percentile Rank. The difference matters.

APR compares a student with other children the same age. GPR compares that student with other students in the same grade. For a younger child in an advanced grade, or an older child who started school later, those comparisons can tell slightly different stories. Neither one is more "true." They answer different questions.

This is also where adults can misread an otherwise normal pattern. A child may look average overall and still show a clear strength in one battery or a relative weakness in another. That is not inconsistent. It is the profile doing its job.

The profile usually matters more than the headline number

The total score draws attention because it is one number. Learning does not happen in one number.

A profile is more like the dashboard of a car than the speedometer alone. If you only check speed, you miss the fuel level, engine temperature, and warning lights. In the same way, a student can have an average overall result with a meaningful verbal strength, a nonverbal advantage, or a quantitative area that takes extra effort.

Patterns like these often matter most:

stronger nonverbal reasoning than verbal reasoning

stronger verbal reasoning than quantitative reasoning

relatively even scores across batteries

one area that is noticeably lower than the others

Those differences can point toward practical next steps. A child with stronger nonverbal reasoning may understand ideas best through models, diagrams, demonstrations, and visual structure. A child with stronger verbal reasoning may show more of what they know through discussion, explanation, and language-rich tasks. A child with an uneven profile may need instruction that uses strengths to support weaker areas, rather than a one-size-fits-all approach.

Ask two questions when you read the profile. What kind of thinking comes more naturally for this child? What kind of thinking seems to cost more effort?

That shift matters. It moves the conversation away from labels and toward planning.
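One way to picture reading a profile is to compare each battery with the middle of the child's own results instead of with the headline number. The Python sketch below does that with stanines. The function name, the example scores, and the two-stanine gap are all assumptions made for illustration. The gap is not a published CCAT rule, and a real interpretation belongs to the school team.

```python
from statistics import median


def relative_pattern(stanines: dict[str, int], gap: int = 2) -> dict[str, str]:
    """Label each battery relative to the median of the child's own profile.

    `gap` is a hypothetical threshold: a battery at least `gap` stanines
    above or below the profile median is flagged as standing out.
    """
    middle = median(stanines.values())
    labels = {}
    for battery, value in stanines.items():
        if value - middle >= gap:
            labels[battery] = "relative strength"
        elif middle - value >= gap:
            labels[battery] = "relative weakness"
        else:
            labels[battery] = "in line with the rest of the profile"
    return labels


# Hypothetical profile: average overall, with one battery standing out.
profile = {"Verbal": 5, "Quantitative": 4, "Nonverbal": 8}
for battery, label in relative_pattern(profile).items():
    print(f"{battery}: {label}")
```

For this made-up profile, only the nonverbal battery is flagged, as a relative strength. The label is a prompt for the two questions above, not a conclusion about the child.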

Use the score to build a growth plan

The most helpful interpretation of a CCAT report connects the score to action. If the profile suggests a relative quantitative weakness, the next step might be more visual maths models, slower pacing for multi-step problems, or a check on whether anxiety or processing speed is interfering. If the profile shows strong reasoning but classroom output is inconsistent, the school may need to look at attention, writing fluency, language demands, or executive functioning.

A score tells you where to look next. It does not tell you everything you need to know.

That is especially important for concerned parents and teachers. The CCAT can suggest how a child reasons under structured conditions. Growth planning comes from combining that snapshot with classroom work, teacher observation, parent input, and, when needed, follow-up assessment. Used that way, the report becomes more than a sorting tool. It becomes the start of a more complete picture of the child's learning path.

How Schools Use CCAT Results in Practice

A CCAT report becomes meaningful when adults connect it to actual school decisions. That’s where things can go well, or go sideways.

In many schools, the first use people think of is gifted screening. That’s understandable. The test is often administered for exactly that purpose. When students meet local criteria, schools may consider enrichment, alternate programming, or further review.

Gifted placement is only one use

Gifted identification gets the attention, but it isn’t the only practical use. A good school team may also use a CCAT profile to understand why a student’s classroom performance looks uneven.

Consider a few realistic school scenarios:

Student one writes complex stories, participates eagerly, and follows sophisticated ideas in discussion, but struggles when maths becomes more abstract.

Student two says little in class, reads slowly, and seems hesitant, yet solves visual patterns and novel problems with unusual ease.

Student three earns decent grades through hard work, but the profile suggests one area of reasoning is taking much more effort than adults realised.

In those situations, the CCAT doesn’t replace teacher judgement. It sharpens it.

What teachers can do with a profile

The strongest use of the Canadian Cognitive Abilities Test is not “sorting kids” but planning instruction.

A teacher who sees a relative verbal strength might lean into oral explanation, discussion, debate, and explicit vocabulary work. A teacher who sees a nonverbal strength may use diagrams, manipulatives, graphic models, and visual examples before expecting verbal explanation. A student with a quantitative strength may benefit from logic-rich tasks and pattern-based entry points into learning.

Here are examples of differentiated classroom responses:

For a verbal strength

Offer think-alouds, verbal rehearsal, and chances to explain reasoning before writing.

For a nonverbal strength

Use visual schedules, concept maps, figure-based modelling, and demonstration before text-heavy instruction.

For a quantitative strength

Present patterns, sequences, and structured problem-solving tasks that let the student reason from relationships.

For a relative weakness

Reduce the load in that area while still teaching it directly. A child with weaker verbal reasoning may still need rich language teaching, just with more scaffolding.

If you're trying to separate reasoning from what a child has already learned in school subjects, these distinctions become clearer alongside individual achievement tests, which focus more directly on academic attainment.

Support planning matters too

The most overlooked students are often those who “look fine” because grades are acceptable. A mismatched profile can show why a child is tired, frustrated, or inconsistent.

A useful CCAT conversation at school sounds like this: “What support or extension fits this pattern?” It should not sound like, “This number tells us who this child is.”

When schools use the report to open planning conversations, the CCAT can be constructive. When they use it only as a gatekeeping device, they miss much of its practical value.

Limitations and Ethical Considerations of the Test

The CCAT can be informative, but it has real limits. Any honest discussion of the Canadian Cognitive Abilities Test has to say that plainly.

First, it is a snapshot. One child may take the test on a good morning with solid focus and confidence. Another may take it while anxious, overtired, hungry, distracted by classroom noise, or rattled by the timed format. Those conditions matter.

A score is not a child

Children are more variable than test reports suggest. Some reason beautifully when talking through an idea but freeze on multiple-choice tasks. Some need extra processing time. Some are still learning English. Some have attention, language, or sensory differences that change how they approach the test.

That means over-interpretation is a real ethical risk.

A low area may reflect a genuine weakness. It may also reflect access issues, pacing issues, or discomfort with the format. A high area may reveal real strength, but it still won’t tell you whether a child can organise homework, manage frustration, collaborate well, or persist when work becomes boring.

The biggest application gap

One of the strongest critiques in the current CCAT conversation is not about whether the test can identify reasoning patterns. It can. The bigger problem is what schools do next.

A review from TestPrep-Online’s CCAT practice test page argues that a critical gap is the failure to translate score profiles into practical differentiated instruction for regular classrooms. The same source notes that CCAT-7 also lacks updates for modern neurodiversity screening in Canada, with 15 to 20% of students described as possibly having unidentified cognitive imbalances.

That doesn’t mean the CCAT is useless. It means the report is too often treated as an endpoint when it should be the beginning of a more careful process.

Questions adults should ask before acting on the score

Was the child regulated on test day?

Does the result match classroom observation, or conflict with it?

Could language, reading, attention, or anxiety have affected performance?

Have we confused aptitude with achievement, or vice versa?

Are we using this score to support the child, or to label them?

For families trying to make sense of assessment terminology that schools sometimes use too casually, this guide to the language of assessment can be grounding.

Good assessment practice protects a child from being reduced to one number.

The ethical standard is simple. Use the CCAT with humility. Combine it with observation, academic data, family input, and where needed, fuller psychoeducational or clinical assessment.

Beyond the Score: Augmenting Assessment with Modern Tools

A CCAT result can be helpful. But it is static. It captures performance at one point in time, in one format, under one set of conditions.

Children don’t learn that way.

A richer picture of cognitive health comes from looking at how a child functions across settings and over time. That means pairing traditional tools with more dynamic observation. Attention. Working memory. Processing speed. Executive function. Response patterns across repeated tasks. These are often the missing pieces when a static score doesn’t line up neatly with classroom reality.

Static score versus living profile

Think about the difference between a school photo and a video. Both show the same child. One gives you a frozen image. The other shows movement, pacing, expression, and change.

That’s the gap many families and educators feel after getting CCAT results. They know the report says something real, but not enough. They want to know what the student is like during learning, not just during one testing block.

Modern digital tools can help fill that gap when they are used ethically. They can make screening more frequent, reduce the “one bad day” problem, and show whether a skill is stable, improving, or variable. They can also help adults test whether supports are working.

Practical ways to use modern supports

This doesn’t require replacing school assessments. It means complementing them.

Some families and teachers use digital reasoning games and structured practice to build familiarity with patterns, pacing, and problem-solving habits. When a student needs support with homework habits rather than pure content review, a well-designed AI homework helper can also reduce friction and reveal where a child gets stuck in the learning process.

The value of newer tools is not novelty. It’s feedback.

Used thoughtfully, they can help answer questions like:

Does attention drop after a short period, or remain steady?

Is processing quick but error-prone, or careful but slow?

Does a child improve when tasks become more visual?

Are interventions helping the student become more efficient over time?

What parents and teachers should do next

If you’ve received a CCAT report, resist the urge to put it in one of two bins: “excellent” or “concerning.” Instead, turn it into a working document.

Do three things:

Read for patterns, not just rankings

Look for relative strengths and weaker areas.

Compare the report with real life

Ask whether the profile fits what teachers and parents observe.

Build a growth plan

Identify supports, classroom strategies, and follow-up assessment if needed.

The best use of the CCAT is not prediction. It’s direction.

A child’s learning journey is dynamic. Our assessment approach should be dynamic too.

If you want to move from a static test result to an ongoing, practical cognitive growth plan, Orange Neurosciences offers a helpful next step. Its tools support rapid cognitive profiling, progress tracking, and targeted intervention planning for families, educators, and clinicians who need more than a one-time score. You can explore the website or reach out by email to see how a fuller picture of attention, memory, executive function, and processing can support better decisions for your child or students.

© 2026 by Orange Neurosciences Corporation