Clinician's Guide to Pearson Q-global for 2026

Apr 17, 2026

You finish a cognitive assessment, and then the real delay starts. Raw scores sit on paper protocols, normative tables need checking, and the report still has to be written in language a family, referral source, or insurer can use. For most clinicians, that administrative stretch is where time disappears and small scoring mistakes creep in.

That’s where Pearson Q-global usually enters the conversation. It isn’t exciting in the way newer digital tools can be, but it has become a standard part of many assessment workflows because it tackles a very practical problem: it moves scoring, reporting, and examinee record management into one web-based system.

In day-to-day practice, the value question isn’t “Is it digital?” It’s “Does it make the work cleaner without creating new friction?” That answer depends on your caseload, your comfort with cloud systems, your privacy obligations, and whether you need formal test reporting or a more adaptive cognitive care workflow. If your current process still depends on paper binders and scattered files, it also helps to tighten the surrounding habits, including effective digital note-taking strategies that make pre-assessment observations, behavioural notes, and interpretation easier to retrieve later.

Clinics that want a broader digital workflow often also look at platforms built around integrated cognitive care rather than test-by-test administration, such as Orange Neurosciences’ clinical solution. But before comparing categories, it helps to understand what Q-global does well, where it creates trade-offs, and what it looks like in a real clinical day.

Moving from Paper Piles to Digital Precision

Paper assessment systems still work. Many of us trained on them, trust them, and can score them accurately. The problem is that they don’t scale well when referral volume rises, turnaround expectations tighten, or multiple clinicians need access to the same examinee record.

Q-global addresses that bottleneck by shifting the clerical side of assessment into a web platform. In practice, that means less time hunting for forms, recalculating derived scores, and retyping score tables into reports. The gain isn’t just convenience. It’s cleaner process control.

Where paper still slows the clinic

Paper workflows usually create friction in three places:

Scoring consistency: Manual conversions and lookups are vulnerable to transcription error.

Report assembly: Score tables often have to be copied into a separate report template.

Record retrieval: Prior assessments may sit in filing cabinets, local drives, or different offices.

A digital platform doesn’t solve interpretation. It does reduce avoidable clerical work around interpretation.

The most useful digital tools don’t replace clinical judgement. They remove the repetitive steps that distract from it.

What changes in daily use

For a neuropsychologist, developmental paediatrician, or rehab clinician, the practical shift is straightforward. You stop thinking in terms of “Where is the protocol?” and start thinking in terms of “Is this assessment licensed and ready to assign?” That’s a better question for a busy practice because it aligns with scheduling, supervision, and report delivery.

The clinicians who get the most from Pearson Q-global usually aren’t the ones chasing novelty. They’re the ones trying to shorten the path from administration to defensible reporting while keeping records better organised.

Understanding Pearson Q-global's Core Purpose

Pearson Q-global is a secure, web-based administration, scoring, and reporting hub for Pearson assessments. It’s best understood as a digital assessment library combined with a scoring engine and a reporting workspace. You’re not buying one all-purpose cognitive platform. You’re using an online environment to access specific Pearson tools under a digital licensing model.

Think of it as a processing centre

A practical analogy helps. Q-global is less like a single test and more like a processing centre for an assessment library. You bring in the response data, whether through digital administration or manual score entry, and the system handles the scoring logic and report generation.

That matters because the old process asks the clinician to do two different jobs at once:

administer and observe well

complete a large amount of clerical conversion work accurately

Q-global separates those tasks more cleanly.

According to Pearson’s product information, Q-global’s web-based architecture supports unlimited examinee data management and automated scoring from any internet-connected device, delivering detailed reports with normative data. Pearson also states that its algorithmic scoring reduces report generation time from an average of 45 to 60 minutes for paper-based methods to under 5 minutes with 99.9% accuracy, and that for Canadian healthcare providers, this can cut care plan development time by an estimated 40% (Pearson campaign page for Q-global products).
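To see what Pearson’s stated figures would mean for a particular caseload, the arithmetic is simple enough to sketch. The snippet below takes the vendor’s numbers at face value and assumes a hypothetical volume of 10 reports per week; treating “under 5 minutes” as exactly 5 minutes keeps the estimate conservative. Swap in your own volume and observed timings before relying on the result.

```python
# Hypothetical weekly volume; replace with your clinic's own figure.
REPORTS_PER_WEEK = 10

# Figures as stated by Pearson (vendor claims, not independently verified here).
PAPER_MINUTES_LOW, PAPER_MINUTES_HIGH = 45, 60
DIGITAL_MINUTES_MAX = 5  # "under 5 minutes", treated as an upper bound


def weekly_minutes_saved(reports: int, paper_minutes: float, digital_minutes: float) -> float:
    """Report-generation minutes saved per week at a given volume."""
    return reports * (paper_minutes - digital_minutes)


low = weekly_minutes_saved(REPORTS_PER_WEEK, PAPER_MINUTES_LOW, DIGITAL_MINUTES_MAX)
high = weekly_minutes_saved(REPORTS_PER_WEEK, PAPER_MINUTES_HIGH, DIGITAL_MINUTES_MAX)
print(f"Roughly {low / 60:.1f} to {high / 60:.1f} hours saved per week")
```

With those assumptions the sketch prints “Roughly 6.7 to 9.2 hours saved per week.” It covers report generation only; review, interpretation, and narrative writing still take clinician time.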

Why clinicians adopt it

The appeal is usually practical, not philosophical:

You need faster score output after administration.

You want one place for examinee records rather than scattered local files.

You rely on Pearson instruments and want digital scoring tied to current norms.

You need reporting support that can be incorporated into clinical documentation.

What it does not do

It’s important not to overstate the platform. Q-global doesn’t replace diagnostic reasoning, collateral integration, behavioural observation, or the narrative work of writing a useful report. It also doesn’t automatically make a clinic interoperable with the rest of its software stack.

In other words, it handles a specific slice of assessment work very well. Clinicians are happiest with it when they buy it for that slice, rather than expecting it to function as a full practice operating system.

Exploring Key Features for Clinicians

What makes Q-global useful in practice is the way three functional pillars work together. If you only look at the homepage description, it can sound abstract. In clinic use, the differences between these pillars matter because they affect scheduling, staffing, and how much of your old paper workflow you can keep.

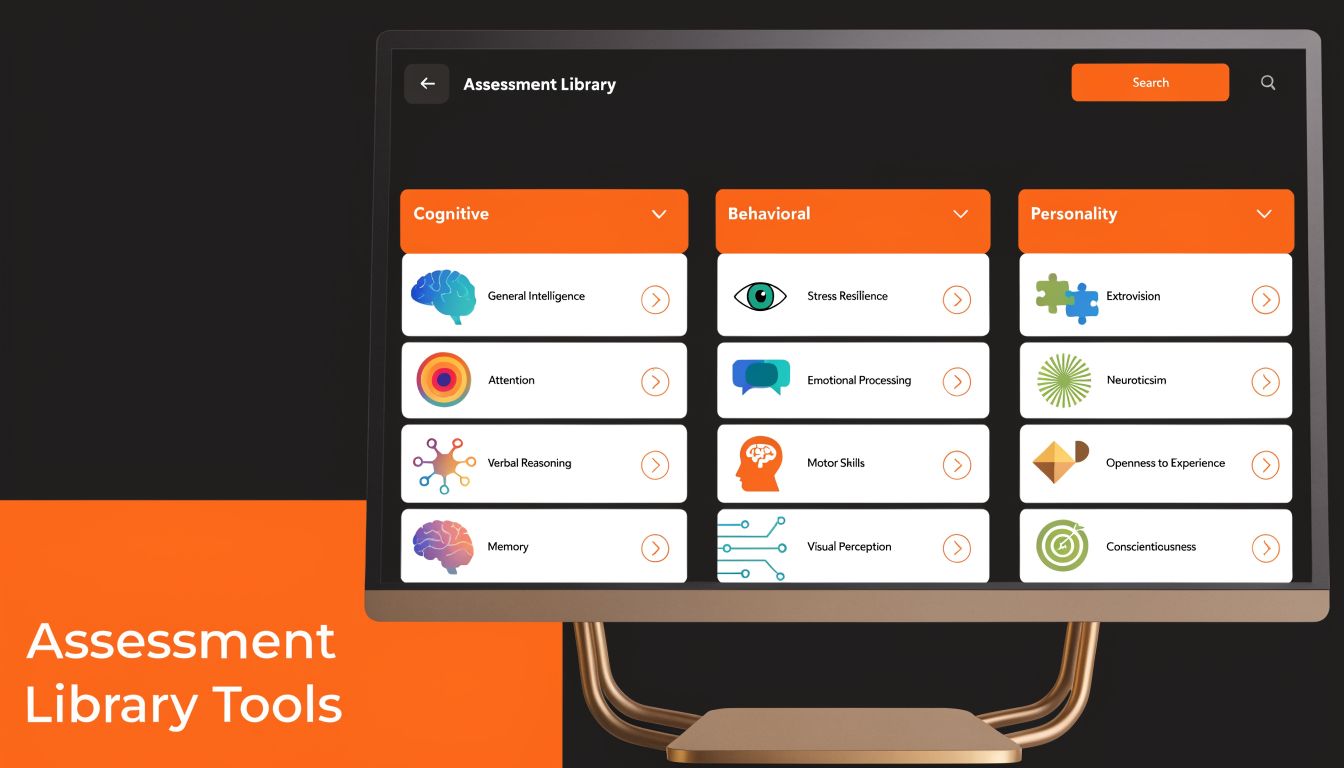

The assessment library

The first pillar is the library of available Pearson assessments. Some clinicians find this particular point confusing. Access to Q-global doesn’t mean open access to every instrument on the platform. Each assessment sits inside its own licensing structure.

That has a direct operational consequence. Before promising a digital administration path to a patient, school, or referral source, check that your clinic has the needed assessment and sufficient usage capacity. If one clinician assumes the test is “in the system” while another assumes it’s “already purchased,” the delay lands on the patient.

A practical example: a clinic may use Q-global heavily for one developmental or language measure while continuing to administer another instrument on paper because the digital workflow doesn’t fit that service line or because the clinic wants to conserve digital usage for specific referrals.

Administration modes

The second pillar is how the assessment gets delivered. In practice, clinicians tend to use Q-global in one of three ways:

On-screen administration: Best when the examinee is in clinic and the team wants a direct digital completion pathway.

Remote on-screen administration: Useful for telehealth workflows when the task and examinee are appropriate for remote delivery.

Manual entry: Often the most realistic bridge for established practices. The clinician administers with paper materials, then enters raw data for automated scoring.

Many established practices begin this way. They don’t digitise everything at once. They keep familiar administration methods and digitise the scoring layer first. For many teams, that’s the least disruptive adoption path.

For clinicians evaluating whether a broader online testing model fits their service design, this guide to cognitive assessment online is useful background because it frames the workflow questions that sit behind the technology choice.

Practical rule: Start with manual entry on high-volume assessments before moving your entire administration model online.
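Q-global automates the conversion, but the accuracy of manually entered raw data still rests with the clinic. One common safeguard, which is a clinic-side habit rather than a Q-global feature, is double entry: two people key the same protocol independently, and any disagreement is resolved against the paper form before scoring. The sketch below assumes raw scores are kept as a simple item-to-score mapping; the item names and allowed ranges are hypothetical placeholders, and real ranges come from the relevant test manual.

```python
from typing import Dict, List, Optional, Tuple

RawScores = Dict[str, int]


def compare_entries(
    first: RawScores, second: RawScores
) -> List[Tuple[str, Optional[int], Optional[int]]]:
    """Return (item, first_value, second_value) wherever two independent
    entries of the same protocol disagree, including items entered only once."""
    mismatches = []
    for item in sorted(set(first) | set(second)):
        a, b = first.get(item), second.get(item)
        if a != b:
            mismatches.append((item, a, b))
    return mismatches


def out_of_range(entry: RawScores, limits: Dict[str, Tuple[int, int]]) -> List[str]:
    """Return items whose raw score falls outside the (min, max) range given
    for it. Items without a listed range are not checked."""
    return [
        item
        for item, score in sorted(entry.items())
        if item in limits and not (limits[item][0] <= score <= limits[item][1])
    ]


# Hypothetical example: item names and ranges are placeholders, not real test values.
entry_by_clinician = {"subtest_a": 14, "subtest_b": 9}
entry_by_assistant = {"subtest_a": 14, "subtest_b": 19}
limits = {"subtest_a": (0, 30), "subtest_b": (0, 16)}

print(compare_entries(entry_by_clinician, entry_by_assistant))
print(out_of_range(entry_by_assistant, limits))
```

Here both checks flag `subtest_b`: the two entries disagree, and the second value exceeds the placeholder maximum, which points to a keying error rather than a real score.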

Scoring and reporting

The third pillar is the one clinicians usually value most after the first few uses. Q-global generates score reports that organise output into a form that can be reviewed, interpreted, and transferred into the clinical report.

That doesn’t mean the report writes itself. It means the platform gives you a cleaner base layer:

| Reporting task | What Q-global helps with | What still depends on the clinician |
|---|---|---|
| Raw score conversion | Automated scoring logic | Verifying unusual patterns and data entry |
| Norm-based output | Structured score tables and report outputs | Interpreting significance in context |
| Visual presentation | Tables, graphs, formatted summaries | Explaining functional meaning to families and referrers |

The mistake to avoid is treating the generated output as the final clinical product. The better use is to treat it as a reliable scaffold for stronger interpretation.

A Typical Clinician's Workflow in Q-global

A realistic Q-global workflow is often less dramatic than vendors make it sound. It’s mostly a sequence of small administrative actions that remove later friction.

Take a common telehealth scenario. A neuropsychologist needs to complete part of an assessment process with a client who lives in another city. The clinician logs in, creates or opens the examinee record, selects the appropriate assessment from the licensed library, and assigns it using the relevant administration mode.

What the workflow looks like in practice

From there, the work usually unfolds like this:

Create the examinee record using the clinic’s preferred data-minimisation approach.

Assign the assessment and confirm the administration format.

Send the access link or prepare the in-clinic device depending on the session model.

Conduct the session while monitoring comprehension, engagement, and test behaviour.

Review returned data in the clinician portal.

Generate the score report and pull relevant tables into the larger evaluation file.
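The first step above mentions a data-minimisation approach. One option some clinics consider is entering a pseudonymous code rather than a name in external platforms, derived from the internal chart number with a keyed hash (HMAC) so the same patient always maps to the same code. This is a hypothetical sketch, not a Q-global feature: the `EX-` prefix, the code length, and the secret handling are assumptions, you would need to confirm which identifying fields the platform actually requires, and pseudonymised records can still count as personal health information.

```python
import hashlib
import hmac


def pseudonymous_examinee_id(chart_number: str, clinic_secret: bytes, length: int = 12) -> str:
    """Derive a stable code from an internal chart number using HMAC-SHA256.

    The same chart number and secret always produce the same code, so staff can
    match platform records back to the chart. Without the secret, the code cannot
    practically be traced back to the chart number. The "EX-" prefix and the
    default length are hypothetical conventions."""
    digest = hmac.new(
        clinic_secret, chart_number.strip().encode("utf-8"), hashlib.sha256
    ).hexdigest()
    return "EX-" + digest[:length].upper()


# Hypothetical values; keep the real secret in the clinic's password manager, not in code.
secret = b"replace-with-a-long-random-secret"
print(pseudonymous_examinee_id("MRN-004512", secret))
```

Using a keyed hash rather than a plain hash matters because chart numbers are short and predictable; without a secret key, anyone could hash every possible chart number and match the results.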

If you’re comparing that with a more integrated care workflow model, how Orange Neurosciences works in practice is a useful contrast because it shows a different philosophy of assessment and follow-up.

Where the platform helps most

The strongest gain appears after administration, not during it. Once the assessment data comes back into the portal, the clinician can move directly into review instead of sitting down with manuals and conversion tables.

That has two benefits. First, turnaround gets shorter. Second, the clinician’s energy stays on interpretation, symptom patterning, and clinical communication rather than arithmetic and document formatting.

If a platform saves time but leaves you with more copying, renaming, and file chasing, it hasn’t improved the workflow enough.

Where friction still appears

The friction points are predictable. Remote sessions still need clear patient instructions. Clinicians still have to confirm that the selected administration mode suits the referral question. And when a clinic uses several digital systems, the Q-global output still has to be incorporated into the broader chart.

So the workflow is better, but it isn’t frictionless. That’s the right expectation.

Navigating Data Security and Canadian Compliance

For Canadian clinicians, the most important Q-global discussion isn’t convenience. It’s privacy governance. If you’re storing cognitive, behavioural, or developmental data in a cloud platform, you need to know how the information is protected and whether that protection aligns with your professional and legal obligations.

The technical safeguards

Pearson states that Q-global uses Advanced Encryption Standard (AES) 256-bit encryption for data at rest and Secure Sockets Layer (SSL) encryption for data in transit (in current usage, “SSL” generally refers to its successor protocol, TLS). Pearson also cites its ISO 27001 Information Security Management accreditation, the international standard for information security management systems (Pearson whitepaper on Q-global and Q-interactive).

In practical terms, those details matter because clinicians aren’t just protecting names and dates of birth. They’re protecting sensitive clinical output that may influence diagnoses, school planning, treatment recommendations, disability documentation, and capacity-related decisions.

What Canadian clinicians should ask

Strong encryption is necessary, but it doesn’t end the compliance conversation. Under PIPEDA and provincial frameworks such as PHIPA, a clinic still has to do its own due diligence around data handling, storage arrangements, access controls, and contractual terms.

Questions worth asking a vendor or procurement team include:

Where is the data stored? Cross-border storage matters for many organisations.

What are the access controls? Role-based access and account hygiene are as important as encryption.

What does the agreement say about data use? Review the fine print before uploading identifiable health data.

How does the platform support auditability? You want to know who accessed or modified records.

If your organisation is reviewing vendor terms or building internal governance language, a plain-language healthcare data usage agreement can be a useful reference point for the kinds of operational questions that should be clarified before adoption.

The Canadian concern is real

Pearson-linked resource material highlights a real concern in Canada. A 2025 Canadian Psychological Association survey reported that 68% of neuropsychologists in Ontario and British Columbia were worried about cross-border data storage for cloud tools like Q-global (Pearson Clinical Australia resources page referencing the survey).

That concern is reasonable. A secure platform can still create organisational discomfort if the storage model, transparency, or vendor documentation doesn’t line up with local privacy expectations. Canadian clinics that want examples of region-specific digital brain health expansion and implementation context may also find this Canada-focused update from Orange Neurosciences and .CA relevant to the broader conversation.

The practical takeaway is simple. Don’t stop at “encrypted.” Ask how the system fits your actual compliance environment.

Billing, Licensing, and Integration Explained

Q-global’s commercial model catches some first-time users off guard because it doesn’t behave like a standard flat software subscription. In many settings, it works as a pay-per-use credit system tied to assessment activity and report generation.

How the licensing logic feels in real life

That model has advantages. A clinic with modest volume doesn’t have to commit to a large enterprise structure to get started. It can buy access for the specific assessments it uses and align spending more closely with caseload.

The downside is administrative. Someone in the practice has to monitor usage, know which reports consume what kind of credits, and avoid the unpleasant surprise of running short just before a scheduled assessment block.

A practical way to manage it is to assign one person to three recurring checks:

Assessment availability: confirm the clinic has the needed instrument

Credit status: review remaining usage before a busy week

Report planning: decide whether the planned output requires a basic or more detailed report type
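The three recurring checks can be written down as a simple pre-week routine. The sketch below is hypothetical: it assumes the clinic tracks licensed assessments, remaining credits per assessment, and a credit cost per report type in its own records, and the assessment names, report-type labels, and costs are placeholders rather than Pearson’s actual pricing.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass
class PlannedSession:
    examinee_code: str
    assessment: str
    report_type: str  # hypothetical labels, e.g. "basic" or "detailed"


def pre_week_check(
    sessions: List[PlannedSession],
    licensed: Set[str],
    credits_remaining: Dict[str, int],
    credits_per_report: Dict[str, int],
) -> List[str]:
    """Return warnings for the coming week: unlicensed assessments first,
    then any assessment whose planned reports exceed the remaining credits."""
    warnings: List[str] = []
    needed: Dict[str, int] = {}
    for s in sessions:
        if s.assessment not in licensed:
            warnings.append(f"{s.examinee_code}: {s.assessment} is not licensed")
            continue
        # Accumulate the credits each licensed assessment will consume this week.
        needed[s.assessment] = needed.get(s.assessment, 0) + credits_per_report[s.report_type]
    for assessment, total in sorted(needed.items()):
        have = credits_remaining.get(assessment, 0)
        if total > have:
            warnings.append(f"{assessment}: need {total} credits, {have} remaining")
    return warnings


# Hypothetical week: assessment names, balances, and costs are placeholders.
week = [
    PlannedSession("EX-01", "TEST_A", "basic"),
    PlannedSession("EX-02", "TEST_A", "detailed"),
    PlannedSession("EX-03", "TEST_B", "basic"),
]
for warning in pre_week_check(week, {"TEST_A"}, {"TEST_A": 2}, {"basic": 1, "detailed": 2}):
    print(warning)
```

Running the check a few days before an assessment block leaves time to buy more usage or reschedule, instead of finding the shortfall on the day.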

Integration is usually the weak spot

This is where many clinicians settle on a more realistic view of the platform. Q-global is often strongest as a standalone assessment environment. It doesn’t always function as a seamlessly integrated part of the clinic’s EMR or EHR.

In practice, the usual workflow is simple but manual. You generate the report, download it, and then upload or summarise the relevant content in your main charting system. That’s manageable in a small practice. In a larger service, repeated manual transfer can become one more clerical layer.

The cost question isn’t only what you pay the vendor. It’s also how much staff time the surrounding workflow still consumes.

If your clinic values highly connected systems, that standalone design may feel dated. If your priority is reliable scoring of established Pearson tools, it may be perfectly acceptable.

Q-global vs Modern Alternatives: A Measured Comparison

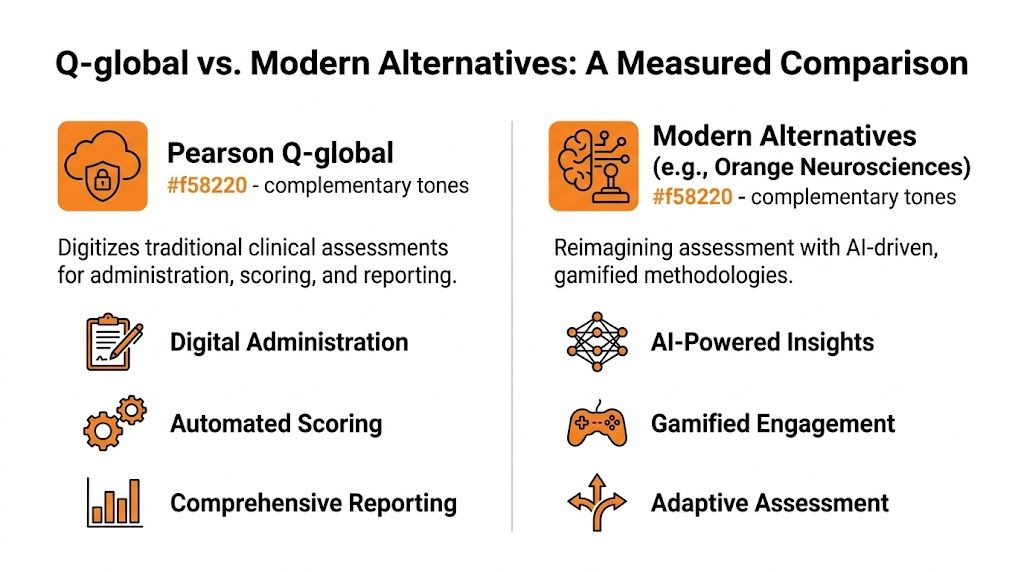

The fairest comparison isn’t “old versus new.” It’s purpose-built digital assessment versus reimagined cognitive care. Q-global digitises established assessment workflows. Modern platforms such as Orange Neurosciences often start from a different premise. They’re built for rapid cognitive profiling, progress monitoring, and tighter linkage between assessment results and next-step intervention.

The key difference is clinical job fit

Q-global is usually the right tool when the clinician needs access to a specific legacy Pearson instrument and wants digital scoring and reporting wrapped around that instrument. It fits formal evaluations, established referral channels, and clinics whose documentation process depends on named tests with familiar interpretive structures.

Modern alternatives often fit a different job. They’re useful when the practice wants faster screening, repeated measurement, patient-friendly interfaces, or a more continuous model that connects assessment output to follow-up training or rehabilitation steps.

Orange Neurosciences, for example, is described by the publisher as an AI-powered, evidence-based platform that provides objective cognitive profiles in under 30 minutes and supports targeted therapy and reassessment. That isn’t the same category of product as Q-global. It’s closer to an integrated cognitive care workflow than a digital container for legacy tests.

Platform Comparison: Q-global vs Orange Neurosciences

| Criterion | Pearson Q-global | Orange Neurosciences |
|---|---|---|
| Primary role | Digital administration, scoring, and reporting for Pearson assessments | AI-powered cognitive assessment and targeted therapy workflow |
| Best fit | Formal use of established named instruments | Rapid screening, monitoring, and ongoing cognitive care |
| Interaction model | Test-specific administration and report generation | Integrated assessment plus intervention support |
| Workflow style | Often standalone, then exported into broader charting | Built around real-time decision support and continuity |
| User experience emphasis | Clinical utility and standardisation | Engagement, accessibility, and scalable follow-up |
| Ideal user question | “I need this specific test scored and reported properly.” | “I need fast cognitive insight and a path to action.” |

What works well and what doesn’t

Use Q-global when:

You need a recognised Pearson assessment for a formal report.

Your clinicians already work within those test frameworks.

You want scoring automation without redesigning your entire service model.

Look elsewhere, or at least compare carefully, when:

You need stronger workflow integration across assessment and intervention

You want repeated cognitive monitoring without heavy test-by-test administration

You care a lot about patient engagement and a less traditional testing feel

Neither category cancels out the other. Some clinics will use both. The important thing is to match the tool to the clinical question rather than forcing one platform to do a job it wasn’t built to do.

Answering Common Clinician Questions

Even after a full review, a few questions tend to come up repeatedly in peer discussions and implementation meetings.

Can I use Q-global without changing my whole assessment style?

Yes. For many practices, the most sensible starting point is partial adoption. Clinicians keep familiar paper administration where that still works well and use Q-global primarily for score entry, automated output, and record management.

That approach is often easier on staff training and less disruptive to established supervision habits. It also gives the team time to decide which parts of the workflow actually benefit from going fully digital.

Is the privacy concern mostly theoretical in Canada?

No. It’s a live operational issue. As noted earlier, a 2025 Canadian Psychological Association survey found that 68% of neuropsychologists in Ontario and B.C. were concerned about cross-border data storage for cloud tools like Q-global. That’s why clinics shouldn’t rely on generic reassurances. They should request clear answers on storage, access, and PIPEDA alignment from the vendor or procurement channel.

A related issue in longitudinal assessment is measurement consistency. If your team is thinking about repeated digital administration over time, this explainer on test-retest reliability in cognitive assessment is worth reviewing because the technology choice is only one part of valid follow-up measurement.

What’s the biggest implementation mistake clinics make?

They assume the software choice is the workflow. It isn’t. The essential work is deciding who creates examinee records, who monitors licensing, how reports move into the chart, what identifiers are used, and how privacy review is documented.

A clinic that settles those decisions early usually adapts well. A clinic that skips them ends up blaming the platform for problems caused by inconsistent internal process.

If you’re weighing Pearson Q-global against a more modern cognitive care model, Orange Neurosciences is worth a closer look. Its platform is built for rapid cognitive assessment, real-time clinical decision support, and targeted therapy workflows that go beyond static report generation. For clinics that want a faster, more integrated path from assessment to action, visit the website or reach out by email to explore whether it fits your setting.

Orange Neurosciences' Cognitive Skills Assessments (CSA) are intended as an aid for assessing the cognitive well-being of an individual. In a clinical setting, the CSA results (when interpreted by a qualified healthcare provider) may be used as an aid in determining whether further cognitive evaluation is needed. Orange Neurosciences' brain training programs are designed to promote and encourage overall cognitive health. Orange Neurosciences does not offer any medical diagnosis or treatment of any medical disease or condition. Orange Neurosciences products may also be used for research purposes for any range of cognition-related assessments. If used for research purposes, all use of the product must comply with the appropriate human subjects' procedures as they exist within the researcher's institution and will be the researcher's responsibility. All such human subject protections shall be under the provisions of all applicable sections of the Code of Federal Regulations.

© 2026 by Orange Neurosciences Corporation